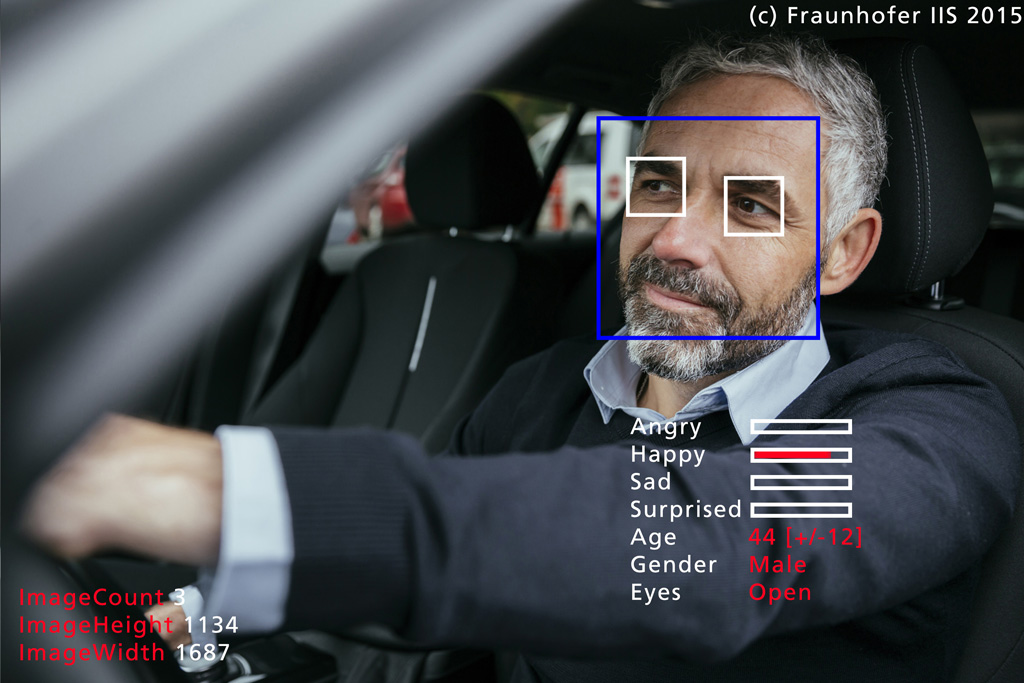

As automated driving systems grow more complex and their requirements more stringent, user interfaces are playing a major role: they must support several functions, process a great deal of information and offer a high degree of usability. But there are still limits to the natural interaction between occupants and vehicles, especially when it comes to switching between or combining the various modes and functions (facial expressions, speech, lighting, etc.). This is precisely where the SEMULIN project comes in. To develop a human-focused human-machine interface (HMI) with a tailored system architecture, we are investigating all available modes with a view to intelligently interpreting and consolidating the aggregated sensor data. This involves harnessing established technologies, including SHORE®, our face detection and analysis software designed for video-based emotion recognition.

Machine learning and artificial intelligence methods, applied across multiple modes, help to draw conclusions about the driver’s condition, identify their intentions and derive their potential reactions. The aim of new approaches such as interactive learning is to allow the system to continuously adapt itself to the user’s needs, both to enhance interaction and to increase user acceptance. Our development process incorporates ethical, legal and social implications (ELSI) as well as psychological models.

The SEMULIN project was launched on November 1, 2020, and is scheduled to run until October 31, 2023. The project consortium is made up of Elektrobit Automotive GmbH (project coordinator), Fraunhofer IIS (Smart Sensing and Electronics; Audio and Media Technologies) and five other industry and university project partners. The project is funded by the German Federal Ministry for Economic Affairs and Energy (BMWi).